
| abstract | lastupdated |
|---|---|
| Programming sound in Live-Sequencer and ChucK | 2013 |

Sound Programming

2013.

Much can be programmed, and that includes sound. In the digital world, sound is typically represented as a stream of sample bytes, about 90 kB per second for 16-bit mono audio at 44.1 kHz, so "printing" sound is merely a matter of printing bytes. As such, any general-purpose language can be used to generate sound.
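
To make the byte-printing idea concrete, here is a minimal Python sketch. It assumes signed 16-bit little-endian mono samples at 44.1 kHz, which works out to 88,200 bytes per second; the format, the constant `SAMPLE_RATE`, and the function name `square_wave_bytes` are illustrative choices, not something the rest of this article depends on.

```python
import struct

SAMPLE_RATE = 44100  # Samples per second (assumed, CD-style rate).


def square_wave_bytes(freq_hz, seconds, volume=0.1):
    """Return raw signed 16-bit little-endian mono samples of a square wave."""
    amplitude = int(32767 * volume)
    n_samples = int(SAMPLE_RATE * seconds)
    samples = []
    for i in range(n_samples):
        # Position within the current cycle, in the range [0, 1).
        phase = (i * freq_hz / SAMPLE_RATE) % 1.0
        # High for the first half of each cycle, low for the second half.
        samples.append(amplitude if phase < 0.5 else -amplitude)
    return struct.pack("<%dh" % n_samples, *samples)
```

Writing the result of `square_wave_bytes(440, 1.0)` to `sys.stdout.buffer` and piping it into a raw-audio player (for example `aplay -f S16_LE -r 44100 -c 1` on Linux) should produce one second of a 440 Hz buzz.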

However, it's boring to create a program that does nothing but print bytes, and it's potentially difficult to make those bytes sound nice; we want abstractions to simplify matters for us: instruments, drums, musical notes, and a high-level program structure. Many programming languages have good libraries that allow us to achieve just that, but to keep it simple we'll focus on how to program sound in two languages designed to output sound: ChucK and Live-Sequencer.

Let's create some sounds.

The square wave

We'll start with ChucK and a small square wave program:

    // Connect a square oscillator to the sound card.
    SqrOsc s => dac;

    // Set its frequency to 440 Hz.
    440 => s.freq;

    // Lower the volume.
    0.1 => s.gain;

    // Let it run indefinitely.
    while (true) {
        1000::second => now;
    }

ChucK is an imperative language. Instructions on how to install and run it can be found on its website, along with other useful information. You can listen to the above sound here.

To do the same in Live-Sequencer, we must find a square wave "instrument" and use that.

    module SquareWave where

    -- Basic imports.
    import Prelude
    import List
    import Midi
    import Instrument
    import Pitch

    -- The function "main" is run when the program is run.
    -- It returns a list of MIDI actions.
    main = concat [ program lead1Square   -- Use a square wave instrument.
                  , cycle (               -- Keep playing the following forever.
                      note 1000000 (a 4)  -- Play 1000000 milliseconds of the musical note A4,
                    )                     -- about 440 Hz.
                  ];                      -- End with a semicolon.

Live-Sequencer differs from ChucK in that it is functional. Another major difference is that while ChucK (in general) generates raw sound bytes, Live-Sequencer generates so-called MIDI codes, which another program converts to the actual audio. Live-Sequencer has a couple of funky features, such as highlighting the part of your program that is currently playing; read about it and how to install and run it at this wiki. You can listen to the above sound here.
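
To give a feel for what "MIDI codes" are, here is a small Python sketch of the raw bytes behind a few common MIDI messages, plus the usual equal-temperament conversion from a MIDI note number to a frequency (note 69 is A4 at 440 Hz). The function names are made up for illustration, and how Live-Sequencer's `program`, `note`, and `lead1Square` map onto these bytes internally is an assumption, not something taken from its source.

```python
def midi_note_to_freq(note):
    """Frequency in Hz of a MIDI note number (A4 = note 69 = 440 Hz)."""
    return 440.0 * 2 ** ((note - 69) / 12)


def program_change(channel, program):
    """Bytes that select an instrument (0-127) on a channel (0-15)."""
    return bytes([0xC0 | channel, program])


def note_on(channel, note, velocity):
    """Bytes that start a note with the given velocity (0-127)."""
    return bytes([0x90 | channel, note, velocity])


def note_off(channel, note):
    """Bytes that stop a note (release velocity 0)."""
    return bytes([0x80 | channel, note, 0])
```

A synthesizer receiving `program_change(0, 80)` followed by `note_on(0, 69, 100)` would, under General MIDI, play A4 on "Lead 1 (square)", which is program 81 when counting from one. The bytes only say what to play; the synthesizer decides how it actually sounds.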

Something more advanced

Let's try to create a small piece of music which can be expressed easily in Live-Sequencer (listen here):

    module MelodyExample where

    import Prelude
    import List
    import Midi
    import Instrument
    import Pitch

    -- Durations (in milliseconds).
    en = 100;
    qn = 2 * en;
    hn = 2 * qn;
    wn = 2 * hn;

    twice x = concat [x, x];

    main = cycle rpgMusic;

    rpgMusic = concat [ partA g
                      , [Wait hn]
                      , twice (partB [b, d])
                      , partB [a, c]
                      , partA b
                      ];

    partA t = concat [ program frenchHorn
                     , mel2 c e 4
                     , mel2 c e 5       -- The '=:=' operator merges two lists of actions
                       =:=              -- so that they begin simultaneously.
                       (concat [ [Wait wn]
                               , mel2 d t 3
                               ])
                     ];

    partB firsts = concat [ program trumpet
                          , concat (map mel0 [c, e])
                            =:=
                            mergeMany (map mel1 firsts)
                          ];

    -- Instrument-independent melodies.
    mel0 x = concat [ note wn (x 3)
                    , note hn (x 4)
                    , note en (x 2)
                    , [Wait wn]
                    , twice (note qn (x 2))
                    ];

    mel1 x = concat [ note (wn + hn) (x 5)
                    , note (hn + qn) (x 4)
                    ];

    mel2 x y n = concat [ twice (note qn (x 3))
                        , concatMap (note hn . y) [3, 4, 4]
                        , note wn (x n) =:= note wn (y n)
                        ];
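
The `=:=` operator used above merges two action lists so that they start at the same time. One way to picture that is the following Python sketch, which models an action list as a finite list of `("wait", ms)` and `("event", payload)` pairs. This representation and the names `to_absolute` and `merge` are assumptions made for illustration; Live-Sequencer's own implementation works on its own data types and on lazy, possibly infinite lists.

```python
def to_absolute(actions):
    """Convert waits and events into (time_ms, payload) pairs plus the total length."""
    now = 0
    timed = []
    for kind, value in actions:
        if kind == "wait":
            now += value
        else:
            timed.append((now, value))
    return timed, now


def merge(xs, ys):
    """Merge two action lists so that both start at time zero."""
    timed_x, end_x = to_absolute(xs)
    timed_y, end_y = to_absolute(ys)
    # sorted() is stable, so simultaneous events keep their xs-before-ys order.
    timed = sorted(timed_x + timed_y, key=lambda pair: pair[0])
    merged = []
    now = 0
    for time, payload in timed:
        if time > now:
            merged.append(("wait", time - now))
            now = time
        merged.append(("event", payload))
    # Pad with a final wait so the result lasts as long as the longer input.
    end = max(end_x, end_y)
    if end > now:
        merged.append(("wait", end - now))
    return merged
```

For example, merging a 200 ms note with a 100 ms note gives both note-on events first, then a 100 ms wait, the shorter note's end, another 100 ms wait, and the longer note's end.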

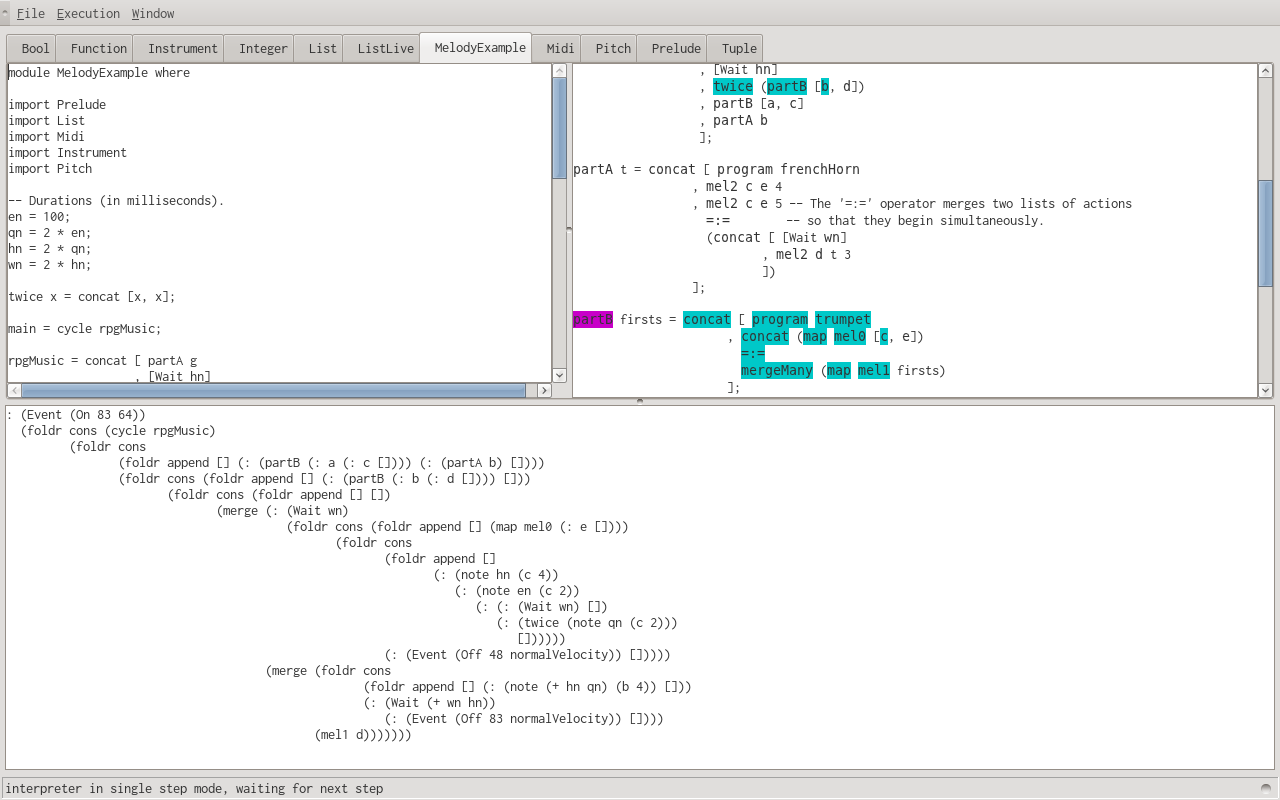

When you play the program from the Live-Sequencer GUI, the code in use is highlighted:

The same could be expressed in ChucK, but the comparison wouldn't be fair. While Live-Sequencer is designed for describing melodies, ChucK's purpose is sound synthesis, which is more general. We'll create something more fitting of ChucK's capabilities, while still focusing on the use of instruments (listen here):

    // Background music for an old sci-fi horror B movie.

    // Filters.
    Gain g;
    NRev reverb;

    // Connect the Gain to the sound card.
    g => dac;

    // Route the sound card's input (adc) through the reverb filter to the
    // sound card's output (dac).
    adc => reverb => dac;

    // Instruments.
    Mandolin mandolin;
    0.2 => mandolin.gain;
    Sitar sitar;
    0.8 => sitar.gain;
    Moog moog;

    // Instrument connections to the Gain.
    mandolin => g;
    sitar => g;
    moog => reverb => g;

    // Play a frequency 'freq' for duration 'dura' on instrument 'inst'.
    fun void playFreq(StkInstrument inst, dur dura, int freq) {
        freq => inst.freq;   // Set the frequency.
        0.1 => inst.noteOn;  // Start playing with a velocity of 0.1.
        dura => now;         // Let time pass while the note sounds.
        0.1 => inst.noteOff; // Stop playing.
    }

    // Play a melody.
    fun void a_melody(StkInstrument inst, int freq_offset) {
        int i;

        // Fork the command to play "in the background".
        spork ~ playFreq(moog, 600::ms, 400 - freq_offset);

        for (0 => i; i < 3; i++) {
            playFreq(inst, 200::ms, 220 + freq_offset + 10 * i);
        }

        // Create an array and use every element in it.
        [3, 4, 4, 5, 3] @=> int ns[];
        for (0 => i; i < ns.cap(); i++) {
            playFreq(inst, 100::ms, ns[i] * 132 + freq_offset);
        }
    }

    // Infinite sound loop of pseudo-random frequencies.
    while (true) {
        spork ~ a_melody(moog, Math.random2(0, 30));
        Math.random2f(0.4, 0.9) => g.gain; // Adjust the gain.
        a_melody(mandolin, Math.random2(0, 350));
        a_melody(sitar, Math.random2(200, 360));
    }

Algorithmic composition

Why not have the computer generate the melody as well as the sound? That sounds like a great idea!

Enter [L-systems](https://en.wikipedia.org/wiki/L-system). An L-system has an alphabet and a set of rules, where each rule is used to transform the symbol on the left-hand side to the sequence of symbols on the right-hand side. We'll use this L-system to generate music:

    -- Based on https://en.wikipedia.org/wiki/L-system#Example_7:_Fractal_plant
    Alphabet: X, F, A, B, P, M
    Rules:
      X -> FMAAXBPXBPFAPFXBMX
      F -> FF

If we evaluate an L-system on a list, the system's rules are applied to each element in the list, and the results are concatenated to make a new list. If we assign each symbol to a sequence of sounds and run the L-system a few times, we get this.

    module LSystem where

    import Prelude
    import List
    import Midi
    import Instrument
    import Pitch

    -- Durations (in milliseconds).
    en = 100;
    qn = 2 * en;
    hn = 2 * qn;
    wn = 2 * hn;

    -- Define the L-system.
    data Alphabet = X | F | A | B | P | M;

    expand X = [F, M, A, A, X, B, P, X, B, P, F, A, P, F, X, B, M, X];
    expand F = [F, F];
    -- Symbols without a rule are dropped here (a textbook L-system would
    -- keep them unchanged as constants).
    expand _ = [];

    -- Map different instruments to different channels.
    channelInsts = concat [ channel 0 (program celesta)
                          , channel 1 (program ocarina)
                          , channel 2 (program sitar)
                          ];

    -- Define the mappings between alphabet and audio.
    interpret X = [Wait hn];
    interpret F = channel 0 (note qn (c 4));
    interpret A = channel 1 (note qn (a 5));
    interpret B = channel 1 (note qn (f 5));
    interpret P = channel 2 (note hn (a 3));
    interpret M = channel 2 (note hn (f 3));

    -- A lazily evaluated list of all iterations.
    runLSystem expand xs = xs : runLSystem expand (concatMap expand xs);

    -- The sound from an L-system.
    getLSystemSound expand interpret iterations start
      = concatMap interpret (runLSystem expand start !! iterations);

    -- Use the third iteration of the L-system, and start with just X.
    main = channelInsts ++ getLSystemSound expand interpret 3 [X];
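
If you want to see which symbols the program above ends up playing, the expansion step is easy to reproduce outside Live-Sequencer. Here is a rough Python equivalent of `expand` and `runLSystem`, using a generator in place of the lazy list. The names `RULES`, `run_l_system`, and `nth_iteration` are invented for this sketch, and it copies the program's behavior of dropping symbols that have no rule.

```python
from itertools import islice

# The two rewriting rules from the L-system above.
RULES = {
    "X": "FMAAXBPXBPFAPFXBMX",
    "F": "FF",
}


def expand(symbol):
    """Rewrite one symbol; symbols without a rule vanish, as in the program above."""
    return RULES.get(symbol, "")


def run_l_system(start):
    """Yield the start string, then every further iteration, without end."""
    current = start
    while True:
        yield current
        current = "".join(expand(symbol) for symbol in current)


def nth_iteration(start, n):
    """Return iteration n (0 is the start string itself)."""
    return next(islice(run_l_system(start), n, None))
```

With these rules, `nth_iteration("X", 1)` is the right-hand side of the X rule, and the string grows quickly from there: 78 symbols after two iterations and 324 after three.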

Using an L-system is one of many ways to take composition to a high level. L-systems can be used to generate fractals, which are nice.

And so on

Many abstractions in sound generation allow for fun sounds to happen. Interested people might want to also take a look at e.g. Euterpea, Pure Data, or Csound.

Originally published here.